After a few years of desperately trying to avoid a laptop with a dedicated Copilot key, my luck has finally run out. I needed a new laptop, and, of course, it came with a dedicated Copilot key where the right Ctrl key once was.

Now, I don’t completely mind it being there, except for those moments where I accidentally press it and awaken Copilot from its slumber. But that made me think: what if there was a better way to use the Copilot key?

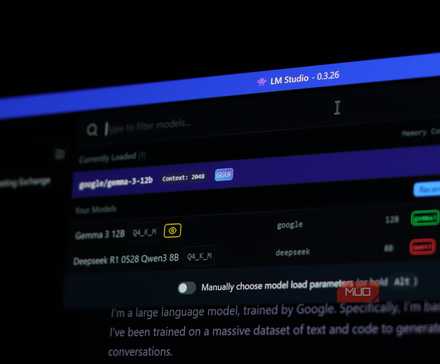

I decided that instead of opening Microsoft’s integrated AI tool, the Copilot key should open LM Studio and let me use a local AI tool instead. And with the help of PowerToys, it was done with minimal fuss.

Use LM Studio for easy local AI management

Local LLMs tailored to your device

There are several different ways you can use a full LLM on your device. For me, the easiest is LM Studio, a free app that makes running any LLM on your device wonderfully easy.

It also checks your hardware and suggests LLMs that your device should be able to handle. For example, I’m writing this on my ASUS Zenbook S14 with 32GB RAM and an Intel Core Ultra 7 258V CPU. It does have a 50 TOPS NPU, but LM Studio doesn’t currently support NPU acceleration, so the dedicated AI hardware isn’t being used.

In my case, that means AI models that can be fully loaded into the available memory and can run on the limited amount of GPU processing available.

I’ve tried a few different options, but Qwen 3.5-9b (developed by Chinese tech giant Alibaba) works best for my requirements and doesn’t hallucinate much. OpenAI’s open-source gpt-oss-20b model has also worked well.
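To get a feel for why some models fit on a machine and others don’t, here is a rough back-of-the-envelope sketch in Python. It is not how LM Studio makes its suggestions. The function names, the 4-bit quantization default, the 20% overhead, and the 8GB set aside for Windows and other apps are all illustrative assumptions.

```python
def estimate_model_memory_gb(
    params_billions: float,
    bits_per_weight: float = 4.0,     # assumption: a 4-bit quantized model
    overhead_fraction: float = 0.2,   # assumption: ~20% extra for context and runtime
) -> float:
    """Return a rough memory footprint in GB (1 GB = 1e9 bytes)."""
    weight_bytes = params_billions * 1e9 * bits_per_weight / 8
    return weight_bytes * (1 + overhead_fraction) / 1e9


def fits_in_memory(
    params_billions: float,
    total_ram_gb: float,
    reserved_gb: float = 8.0,         # assumption: memory kept free for the OS and apps
    **estimate_options: float,
) -> bool:
    """Check whether the estimated footprint fits in the RAM left after the reserve."""
    needed_gb = estimate_model_memory_gb(params_billions, **estimate_options)
    return needed_gb <= total_ram_gb - reserved_gb


if __name__ == "__main__":
    # Illustrative model sizes on a 32GB laptop.
    for size in (9, 20, 70):
        needed = estimate_model_memory_gb(size)
        verdict = "fits" if fits_in_memory(size, total_ram_gb=32) else "too big"
        print(f"{size}B parameters: about {needed:.1f} GB ({verdict})")
```

Under these assumptions, a 9-billion or 20-billion-parameter model sits comfortably inside 32GB of RAM, while a 70-billion-parameter model does not. Real-world usage depends on the quantization and context length you pick, so treat the numbers as a starting point rather than a guarantee.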

- Head to LM Studio and download the latest version. Once downloaded, install LM Studio following the on-screen instructions. It’s a standard installation process.

- Open LM Studio. You don’t need to create an LM Studio account to use local AI on your machine, so that part of the process is optional.

- Select the Model Search icon in the menu on the left. The Model Search dialog will open and suggest AI models that fit your device. Once you know what you want, select Download, and wait for it to complete.

- Once it’s downloaded, you can load the AI model into memory on your device and start using it.

I’d suggest trying a few different models to see what works for your requirements. Even though you can load a model into memory and use it, you may find the output too slow, or in some cases, it may give you outdated information. Or, as I found with Nvidia’s Nemotron 3 Nano 4B model, it may hallucinate repeatedly.

Customize the Copilot key with PowerToys

Microsoft’s open-source tool is full of useful apps

Next up, you need to download Microsoft’s open-source PowerToys utilities for Windows. This is a set of free apps that bring extra functionality to Windows, much of which should arguably feature in the default operating system installation.

The tool we want to use is Keyboard Manager, which is a super-easy way to reprogram your keyboard. If you’ve ever mapped controller buttons or keyboard inputs in a video game, it’s a very similar process.

- Download PowerToys and complete the installation process.

- Open PowerToys and type Keyboard Manager in the search bar. Select Keyboard Manager.

- Now, set the Keyboard Manager toggle to On, then select Open editor > Add new remapping.

The New remapping options feature two panels: one for the key you want to remap, and one for the key, app, URL, or shortcut you want to switch it to. You can even have it insert text or complete a text string, which is useful for anything you frequently type.

- On the left, under Trigger, select the empty box, then press the Copilot key. It should register with its official key bind.

- Next, move to the Action panel and select Open app from the dropdown menu. This will reveal some information boxes.

- Input the Program path for LM Studio in the first box. The path must point at the LM Studio executable itself, not just the folder that contains it. For example, on my laptop, the path is C:\Users\Gavin\AppData\Local\Programs\LM Studio\LMStudio.exe

- Save your remapped key, and you’re good to go.
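The Program path step is the easiest one to get wrong, so here is a small Python sketch for sanity-checking a path before you paste it into Keyboard Manager. It is not part of PowerToys or LM Studio. The function names and the `YourName` path are hypothetical placeholders, so swap in the path from your own machine.

```python
from pathlib import Path, PureWindowsPath


def looks_like_program_path(raw: str) -> bool:
    """String-only check: does the text name a .exe file rather than a folder?"""
    cleaned = raw.strip().strip('"')  # tolerate pasted quotes and stray spaces
    return PureWindowsPath(cleaned).suffix.lower() == ".exe"


def program_exists(raw: str) -> bool:
    """Disk check: is there actually a file at that location on this machine?"""
    return Path(raw.strip().strip('"')).is_file()


if __name__ == "__main__":
    # Hypothetical example path; replace YourName with your Windows user folder.
    candidate = r"C:\Users\YourName\AppData\Local\Programs\LM Studio\LMStudio.exe"
    if not looks_like_program_path(candidate):
        print("That looks like a folder. Point the path at the .exe itself.")
    elif not program_exists(candidate):
        print("No file found at that path. Check where LM Studio was installed.")
    else:
        print("Path looks good. Paste it into the Program path box.")
```

The first check catches the common mistake of pasting the install folder, and the second confirms the file is really there before you save the remapping.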

Now, when you press the Copilot key, you’ll summon your personal, private, local AI, rather than awakening Copilot from its slumber.

Why I don’t want the Copilot key to open Copilot

Nopilot, no

Microsoft was one of the first tech companies to fully embrace AI, announcing that it would replace its iconic Windows key with the Copilot key in early 2024, then pushing Copilot into every app and service it possibly could.

Forcing Copilot into every nook and cranny of Windows, Office, GitHub, and more hasn’t gone down well with most, but thankfully, removing Copilot and other AI features from Windows can be done with a little effort.

In the end, the Copilot key didn’t replace the Windows key. They both live on your keyboard, with the Windows key retaining its overall usefulness… and the Copilot key seemingly limited to just opening Copilot. Microsoft has even admitted that its AI push is problematic.

In theory, having an AI option baked into your operating system is great. But the reality is that it’s completely pointless when you don’t have an internet connection, severely limiting its usefulness versus a truly on-device, local AI tool.

Furthermore, every prompt you enter is sent to Microsoft, processed on its servers, logged, and then returned to you. Using a local large language model (LLM) restores your privacy, keeping your prompts and responses on your device at all times, away from the prying eyes of big tech.

Finally, you won’t run into any usage limitations with your local LLM. It’s yours, offline, all the time. It doesn’t phone home to check if you’ve sent 300 messages in an hour and then degrade your experience.

That alone makes a local LLM worth your time.

- OS: Windows, macOS, Linux

- Developer: Element Labs

- Price model: Free

LM Studio is a desktop app for running large language models locally on your PC. It supports popular open-weight models, offers an intuitive chat interface, and gives you full control over privacy, performance, and offline use without relying on cloud-based AI services.