Everyone has an opinion about the best AI chatbot. Most opinions, unfortunately, are based on vibes, company-written benchmarks, or whichever model impressed someone that day. I wanted a better way to test leading chatbots against my real work, free from my own assumptions.

It turns out that the tool already exists, it’s free, and it changed which AI chatbot I actually reach for every day.

Why most “best AI chatbot” lists don’t actually help you

Your tasks are different from their benchmarks

Most AI chatbot comparisons test the same things: write a poem, explain quantum physics, or solve a math problem. Those types of prompts show general capability but tell you little about whether a model fits your needs.

I write about technology. My real tasks are drafting article sections, summarizing research, rephrasing awkward sentences, and generating quick code snippets. Benchmarks on creative writing or advanced calculus don’t help me.

I needed a way to test top models on my tasks, ideally without knowing which model I was using. Bias is real. If I know it’s Claude or ChatGPT, I might subconsciously grade on a curve.

How Chatbot Arena tests AI models without bias

What makes blind testing more reliable than reviews

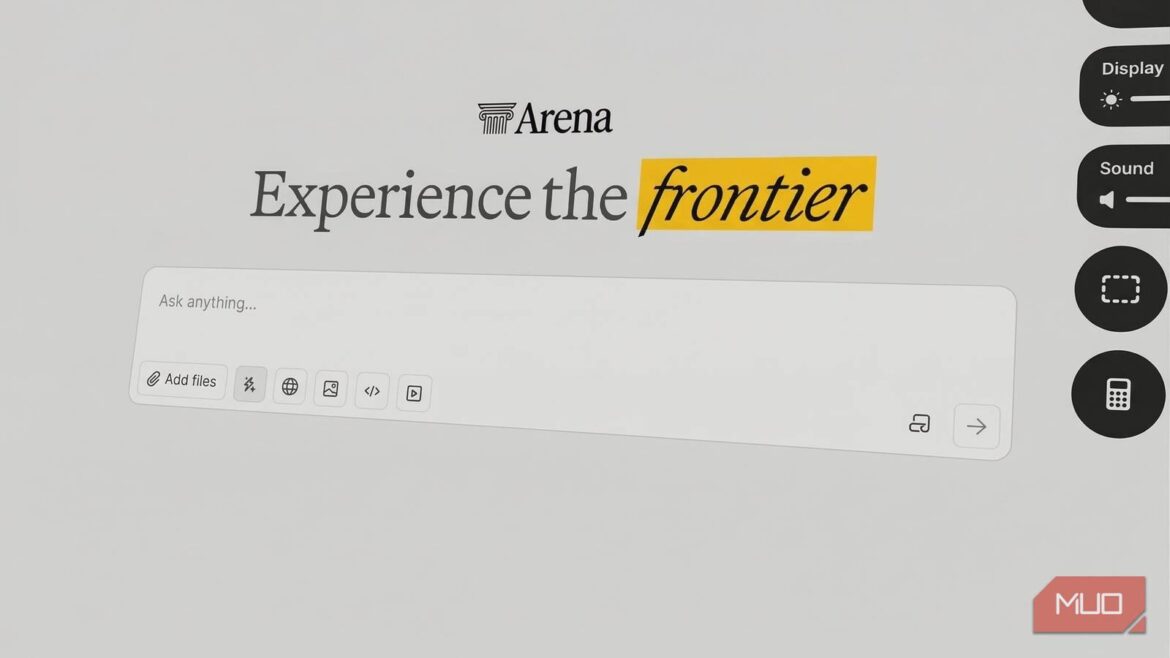

Chatbot Arena, which grew out of UC Berkeley research, cuts out the marketing. Type a prompt and get two anonymous AI responses side by side. Pick the winner. After you vote, the platform reveals which models you compared.

The blind testing is the whole game. You can’t favor a brand you’ve paid for or a model you’ve already decided is best. You’re just comparing two responses to your actual prompt and picking the better one.

The platform tracks votes from millions of users and ranks models using an Elo-style system, like chess. But the global leaderboard isn’t what matters. Run your own tasks and see which model wins for you.
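For the curious, here is roughly what an Elo-style update looks like. This is a minimal Python sketch of the classic chess formula, not Chatbot Arena’s actual code; the platform publishes its own methodology, which may use a different statistical model. The starting rating of 1000 and the K-factor of 32 below are assumptions chosen for illustration.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the standard Elo logistic curve."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


def elo_update(
    rating_a: float, rating_b: float, score_a: float, k: float = 32.0
) -> tuple[float, float]:
    """Return updated (rating_a, rating_b) after one blind vote.

    score_a is 1.0 if A won, 0.0 if B won, 0.5 for a tie.
    k (assumed 32 here) controls how far a single vote moves the ratings.
    """
    exp_a = expected_score(rating_a, rating_b)
    new_a = rating_a + k * (score_a - exp_a)
    # B's actual and expected scores are the complements of A's,
    # so whatever A gains, B loses (the update is zero-sum).
    new_b = rating_b + k * ((1.0 - score_a) - (1.0 - exp_a))
    return new_a, new_b


if __name__ == "__main__":
    # Two hypothetical, evenly rated models; model A wins one vote.
    a, b = elo_update(1000.0, 1000.0, score_a=1.0)
    print(round(a, 1), round(b, 1))
```

The key property is that an upset moves ratings more than an expected win: beating a higher-rated model yields a bigger gain because the expected score was low.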

It’s free, no account is required to get started, and it takes about 30 minutes to get a genuine read on which model fits your workflow.


I ran 40 blind AI matchups on real writing tasks: here’s what happened

The four task categories I used (and why they matter)

I chose four task categories I encounter in my work every week and ran 10 matchups in each:

- Writing and editing: Rough draft paragraphs submitted for tightening.

- Research summarization: Long blocks of technical text submitted for clear, reader-friendly summaries.

- Headline and title generation: Article topics and angles submitted for the most compelling headline options.

- Explaining complicated topics simply: Technical subjects submitted to be made understandable without dumbing them down, a core skill for anyone writing about tech.

I tracked results manually after each reveal, keeping a simple running tally by category across all 40 rounds.
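A notes app works fine for this, but if you prefer a plain text file, a few lines of Python can do the counting. This is a hypothetical sketch: the one-line-per-vote `category,winner` format and the placeholder model names are my assumptions, not an export from Chatbot Arena.

```python
from collections import Counter, defaultdict

# Hypothetical log format: one line per vote, "category,winning model".
# Model names and counts are placeholders, not real results.
SAMPLE_LOG = """\
writing,Model A
writing,Model B
summarization,Model A
headlines,Model A
"""


def tally(log_text: str) -> dict[str, Counter]:
    """Count wins per model within each task category."""
    results: dict[str, Counter] = defaultdict(Counter)
    for line in log_text.strip().splitlines():
        if not line.strip():
            continue  # skip blank lines between entries
        category, winner = (part.strip() for part in line.split(",", 1))
        results[category][winner] += 1
    return results


if __name__ == "__main__":
    # Print each category's models, most wins first.
    for category, wins in tally(SAMPLE_LOG).items():
        print(category, wins.most_common())
```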

Across all four tasks, Claude came out on top. Forty rounds of blind testing, and one model consistently produced responses I preferred before I knew who wrote them. That’s about as clean a result as this kind of testing can produce.

Gemini 3.1 Pro Preview was a legitimate second. It finished behind Claude overall but was the only model that regularly challenged it. In a few writing and summarization matchups, the gap was narrow enough that I hesitated before voting. If you’re a heavy Gemini user, you’re not making a bad choice; you’re just not making the best one, at least for writing-adjacent tasks.

I expected the results on code-related prompts to be closer, but Claude’s responses were cleaner, better structured, and worked on the first try, with no hallucinated libraries or broken syntax to fix.

The biggest surprise? Not every big name held up. GPT-4o was in the mix, yet only Claude and Gemini consistently led. Other models occasionally won rounds, but those two outperformed on my professional tasks.

How to find the best AI chatbot for your specific tasks

The four rules that make your results actually meaningful

Use real work: Go to lmarena.ai and use prompts from your actual work rather than hypotheticals. Paste in a real email you need to rewrite, a real document to summarize, or a real problem to solve.

Run volume: Aim for at least eight to 10 matchups per task type before deciding. Single matchups are noisy; patterns only emerge with volume.

Track manually: Keep a simple running tally in Apple Notes, on a sticky note, or wherever is convenient. Chatbot Arena doesn’t generate a personal results summary, so manual logging is the way to go.

Trust the hesitation: Notice when you hesitate before voting. That usually means the models are close on that task, which is useful information even without a clear winner.
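To see why the “run volume” rule matters, it helps to ask how often a model would rack up a given score by pure luck. The sketch below is a textbook one-sided sign test in Python, assuming each vote between two equally good models is an independent 50/50 coin flip and ignoring ties; it is an illustration, not something Chatbot Arena reports.

```python
from math import comb


def chance_of_at_least(wins: int, rounds: int) -> float:
    """Probability of at least `wins` wins in `rounds` votes if both models
    were truly equal (each vote an independent 50/50 coin flip)."""
    if not 0 <= wins <= rounds:
        raise ValueError("wins must be between 0 and rounds")
    # Count every outcome with `wins` or more wins, out of 2**rounds outcomes.
    favorable = sum(comb(rounds, k) for k in range(wins, rounds + 1))
    return favorable / 2 ** rounds


if __name__ == "__main__":
    for wins, rounds in [(1, 1), (6, 10), (8, 10), (10, 10)]:
        print(f"{wins}/{rounds}: {chance_of_at_least(wins, rounds):.3f}")
```

Under those assumptions, a single win tells you nothing (a 50% chance), while 8 wins out of 10 would happen by luck only about 5.5% of the time (56 outcomes in 1,024), which is why a pattern starts to mean something after eight to 10 rounds.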

Claude Opus 4.6 won, but the real winner is the method

Global rankings make for good debate, but it’s your own experimentation that determines which AI model actually maximizes your productivity.

Forty blind matchups with my real work gave me a clear answer: Claude Opus 4.6 performs best for my actual tasks. Not because I expected it to win (I didn’t), but because it consistently produced the responses I preferred before the reveal.

However, there is one major caveat: the pace of change. In the AI world, the “best” model is a moving target. These models are constantly updated, and what leads the pack today could be overtaken in six months, or even 30 days. Today, Claude is my winner, but the beauty of Chatbot Arena is that I can rerun this entire experiment next month to see whether a new Gemini update or a surprise GPT release has taken the lead.

Ultimately, blind testing isn’t just about validation. It’s about staying open to discovering new preferences as models evolve.