I have been through this one myself, so I know how frustrating it is: a cross-platform matrix fires off 60 jobs at once and half of them fail with a 403. The logs say “API rate limit exceeded for installation ID”, and you are left wondering why CI died on what should have been a simple npm install or checkout step. This is not a flaky test. It is a direct result of hitting the GitHub API rate limit, and it tends to happen at the most inconvenient times.

I am writing this because the usual advice, just set max-parallel, did not work for me. My matrix had 10 OS versions and 6 Python versions, all pulling dependencies that use the GITHUB_TOKEN to hit the REST API. It took several frustrating hours of trial and error and terminal scrolling to find out what actually works for my use case, so here is what I found useful.

Quick Summary

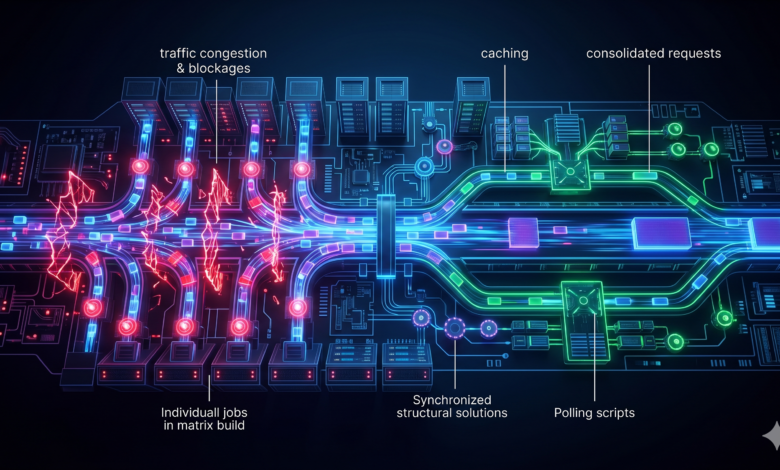

- You will likely hit the primary rate limit for the “installation” because each job runs in parallel, and therefore contributes to your total shared rate limit.

- Consolidating API calls, setting the max-parallel option, and caching API responses before your scripts run will help to reduce your API call count.

- If you still run into the rate limit, you can use a simple polling script that waits until the reset timestamp so your jobs can continue to run.

- For long-term solutions, creating a fine-grained Personal Access Token (PAT) and granting repo‑level access will prevent your pipelines from conflicting over the same token’s budget.

Diagnosing github actions api rate limit exceeded installation matrix Failures

Identify the REST API limits that cause 403 errors when jobs run concurrently.

How Matrix Expansions Deplete the GITHUB_TOKEN Quota

Every job in your strategy matrix receives its own GITHUB_TOKEN, but the tokens do not each get their own budget: the GITHUB_TOKEN is limited to 1,000 REST API requests per hour per repository, and every job in the run draws from that same pool. That may seem like a considerable number until you realize that a single actions/checkout (which authenticates and may fetch additional refs) and many toolchain installers each generate multiple REST API calls. A matrix with thirty-six (36) concurrent jobs can consume 1,000 requests within a few minutes.

The issue gets worse when third-party Actions do not cache their responses and request the /repos and /installation/repositories endpoints again on every run. Those requests count against the limit of the installation that issued the token. That is why you see the message “API rate limit exceeded for installation” rather than the generic user rate limit error. Although the quota is consumed through the GITHUB_TOKEN, the installation limit is shared by every repository that uses the same App installation, so one repository making excessive API calls can cause failures in the others.
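To get a feel for how quickly a matrix can burn through the budget, here is a back-of-the-envelope sketch. The 1,000 figure is the hourly limit mentioned above; the 30 calls per job is a made-up assumption for illustration, so measure your own jobs before trusting the result.

```shell
# Rough estimate of how a matrix compares with the hourly quota.
# calls_per_job is a hypothetical number; measure your own workflow.
quota_estimate() {
  local quota=$1 jobs=$2 calls_per_job=$3
  local total=$(( jobs * calls_per_job ))        # calls the whole matrix would make
  local max_jobs=$(( quota / calls_per_job ))    # jobs that fit inside the quota
  echo "total_calls=${total} jobs_within_quota=${max_jobs}"
}

# Example: 36 jobs, assuming roughly 30 REST calls each, against 1,000 per hour.
quota_estimate 1000 36 30
```

With those assumed numbers the matrix needs 1,080 calls, while only 33 jobs fit inside the quota, so the last few jobs would be the ones that fail.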

Real-World Signals: Identifying the 403 Forbidden Response

You can see the 403 status in the raw log files. The response also carries an X-RateLimit-Reset header and a message naming the installation. This is the output of a gh command that was run by one job in a large matrix:

    ##[debug]Evaluating: secrets.GITHUB_TOKEN
    gh api /repos/myorg/myrepo/pulls?state=open
    gh: HTTP 403: API rate limit exceeded for installation ID 12345678.
    Limit: 5000, Used: 5000, Reset: 2026-05-03T14:23:00Z.
    (https://docs.github.com/rest/overview/resources-in-the-rest-api#rate-limiting)
    ##[error]Process completed with exit code 1.

The X-RateLimit-Reset value tells us the earliest time at which the quota is replenished; we will come back to it later. Note that the limit belongs to the installation rather than to an individual user, so it does not affect personal account limits.
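If you want the reset time straight from the response headers instead of the log text, a small sketch like the following can pull it out. It assumes you have the raw headers on stdin (for example from gh api -i or curl -sI); the function names are my own, and the header value is the reset time in UTC epoch seconds.

```shell
# Extract the x-ratelimit-reset value (UTC epoch seconds) from raw HTTP headers on stdin.
# Header names are matched case-insensitively and carriage returns are stripped.
reset_epoch_from_headers() {
  tr -d '\r' | awk -F': *' 'tolower($1) == "x-ratelimit-reset" { print $2; exit }'
}

# Seconds left until the reset, never negative.
# Arguments: reset epoch, current epoch.
seconds_until_reset() {
  local reset=$1 now=$2
  local delta=$(( reset - now ))
  (( delta < 0 )) && delta=0
  echo "$delta"
}

# Possible usage:
#   reset=$(gh api -i /rate_limit | reset_epoch_from_headers)
#   sleep "$(seconds_until_reset "$reset" "$(date +%s)")"
```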

What I tried (and why it didn’t hold up)

Before fixing the problem properly, I went through a few naive fixes.

First, I reduced the number of parallel jobs to two. Initially, this resulted in the 403 response errors disappearing; however, my jobs took 35 minutes to run instead of 5 minutes, which was unacceptable for me with 200 engineers waiting for CI responses.

Next, I wrapped each gh command in a retry loop that slept for about 30 seconds after a failed attempt. This kept throttling at bay for smaller batches of jobs. But when many repositories ran at once, the retry loops piled up and I was back to the original problem: retries and fixed delays did not address the underlying cause.

So I changed my approach again. I needed a way to synchronize the jobs so that they all respect the API’s reset timestamp, and a way to eliminate redundant API requests during execution.

How to optimize github actions matrix jobs for Scale

If you make some fundamental changes to how your workflows are constructed, you can immediately eliminate any redundant API calls being made.

Consolidating Redundant REST Requests Across Jobs

The quickest fix is to consolidate requests when several jobs ask for the same data. When you are retrieving release assets or checking PR labels, do it once in an initial job and pass the result to the other jobs as a job output or as an artifact.

For example, rather than every Python version job using the GitHub API to obtain a shared binary, I created one setup job that gets the binary and uploads it as an artifact. The matrix jobs then use the artifact rather than the GitHub API to download it. So instead of one API call per matrix job, we now make only one or two calls in total (depending on size).
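The "fetch once, share the result" idea can be sketched as a pair of shell helpers for the setup job. GITHUB_OUTPUT is the file GitHub Actions reads step outputs from; the helper names and the labels example in the comment are hypothetical, and this handles single-line values only.

```shell
# Append a single-line name=value pair to the file GitHub Actions reads step outputs from.
publish_output() {
  local name=$1 value=$2
  printf '%s=%s\n' "$name" "$value" >> "$GITHUB_OUTPUT"
}

# Run a caller-supplied command once and publish its output under the given name.
# Hypothetical usage inside a setup job step:
#   fetch_and_publish pr_labels gh api "repos/$GITHUB_REPOSITORY/labels" --jq '[.[].name] | join(",")'
fetch_and_publish() {
  local name=$1; shift
  local value
  value=$("$@") || return 1     # propagate a failed fetch instead of publishing garbage
  publish_output "$name" "$value"
}
```

The matrix jobs would then read the value through the needs context, once the setup job maps the step output to a job output, instead of each calling the API themselves.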

Implementing github actions conditional execution max-parallel

When you cannot avoid calling the API in every job, you need to throttle how many jobs run concurrently. GitHub Actions has this built in as the max-parallel key of the job’s strategy. It is not a fancy fix, but it lets you control how many jobs use the token at the same time.

Here is a simplified example from a workflow that tests Node.js across four operating systems and six versions.

    jobs:
      test:
        runs-on: ${{ matrix.os }}
        strategy:
          max-parallel: 2
          matrix:
            os: [ubuntu-latest, windows-latest, macos-13, macos-14]
            node-version: [16, 18, 20, 21, 22, 23]
        steps:
          - uses: actions/checkout@v4
          - uses: actions/setup-node@v4
            with:
              node-version: ${{ matrix.node-version }}

Setting max-parallel: 2 means that no more than two jobs will access the API at any one time, helping keep the token’s request count from spiking. The trade-off for this is that the overall wall-clock time of the workflow will increase, but this could be mitigated by implementing some caching.
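The wall-clock cost of throttling is easy to estimate. The sketch below assumes every job takes the same time (5 minutes here, an invented figure) and ignores runner queueing, so treat it as a rough guide only.

```shell
# Estimate wall-clock minutes for a matrix, assuming every job takes the same time.
# Real jobs vary and runners queue, so this is only a lower-bound style estimate.
matrix_wall_clock() {
  local jobs=$1 max_parallel=$2 minutes_per_job=$3
  local waves=$(( (jobs + max_parallel - 1) / max_parallel ))   # ceiling division
  echo $(( waves * minutes_per_job ))
}

matrix_wall_clock 24 2 5    # 4 OSes x 6 Node versions, two at a time
matrix_wall_clock 24 8 5    # same matrix, eight at a time
```

Under those assumptions the 24-job matrix takes 60 minutes at max-parallel: 2 and 15 minutes at max-parallel: 8, which is why it pays to pick the highest value your quota tolerates.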

Reducing Overhead: cache dependencies actions/cache

Reusing downloaded dependencies between builds cuts down on unnecessary API requests.

Configuring Keys for Matrix-Specific Build Caching

The actions/cache action stores dependencies that would otherwise have to be downloaded fresh through the GitHub API (or, worse, from external package registries that count against your rate limit when you authenticate via GitHub). On a cache hit, the step restores the files from the cache instead of fetching each dependency again.

I use a key that combines the runner OS, the matrix value, and a hash of the dependency manifest. In this way each matrix cell has its own individual cache but is still accessible during future runs. Below is the YAML that I have created to work with a multi-version Node.js build.

    - uses: actions/cache@v4
      with:
        path: ~/.npm
        key: ${{ runner.os }}-node${{ matrix.node-version }}-npm-${{ hashFiles('**/package-lock.json') }}
        restore-keys: |
          ${{ runner.os }}-node${{ matrix.node-version }}-npm-

The hashFiles function generates a unique “stamp” from your lockfile. Whenever you update a dependency, the key changes and a new cache is created, while the obsolete cache eventually gets evicted. This keeps the cache relevant and saves you from downloading 500 MB of packages on every push.

For more on key design, refer to the official actions/cache documentation; it matters once you begin to mix compilers and cross-compilation toolchains.
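To see why the key changes when the lockfile changes, here is a local approximation in shell. It mirrors the shape of the key above but is not byte-for-byte what hashFiles() produces; the function name is hypothetical and it assumes sha256sum is available (on macOS you would need shasum -a 256 instead).

```shell
# Build a cache-key-like string from an OS name, a matrix value, and a lockfile digest.
# This imitates the structure of the workflow key; it is not the real hashFiles() output.
cache_key() {
  local os=$1 node_version=$2 lockfile=$3
  local digest
  digest=$(sha256sum "$lockfile" | cut -d' ' -f1)
  echo "${os}-node${node_version}-npm-${digest}"
}

# Possible usage:
#   cache_key Linux 20 package-lock.json
```

Any edit to the lockfile changes the digest, so the exact key misses and the restore-keys prefix is what lets the job fall back to the most recent older cache.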

Purging Stale Caches to Prevent Storage Bottlenecks

Caches are excellent, but GitHub enforces a 10 GB cumulative limit per repository, and when many older caches pile up, the eviction process might delete one that you still need. To avoid this, I run a scheduled workflow that calls gh cache delete for branches that no longer exist, or I delete older caches manually from the Actions > Caches tab when they get out of hand. It is an extra maintenance task, but it prevents cache misses in future matrix runs.
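The selection step of such a cleanup can be sketched as a small filter. It takes cache IDs and refs as tab-separated lines and prints the IDs whose branch is gone; the function name and the wiring in the comments are assumptions (they presume a gh version that ships the cache commands with JSON output, and GNU xargs for the -r flag), not the exact workflow I run.

```shell
# Print the IDs of caches whose ref points at a branch that is not in the live list.
# stdin: "<cache id><TAB><ref>" per line, e.g. "42<TAB>refs/heads/feature-x"
# $1:    file with one live branch name per line
stale_cache_ids() {
  local live_file=$1 id ref branch
  while IFS=$'\t' read -r id ref; do
    [[ $ref == refs/heads/* ]] || continue     # skip pull request refs and tags
    branch=${ref#refs/heads/}
    grep -qxF -- "$branch" "$live_file" || echo "$id"
  done
}

# Possible wiring in a scheduled workflow:
#   git ls-remote --heads origin | sed 's#.*refs/heads/##' > live.txt
#   gh cache list --limit 100 --json id,ref --jq '.[] | [.id, .ref] | @tsv' \
#     | stale_cache_ids live.txt | xargs -r -n1 gh cache delete
```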

Custom Polling: wait for api rate limit reset bash script

If you hit the hard limits of the API anyway, you need a workaround, usually a script.

Querying the GitHub /rate_limit Endpoint

Even with all the structural changes, a large migration or a fleet-wide scheduled CI build can push you right back against the ceiling. So I keep a small bash snippet handy that queries the API for the rate limit status. It checks how many requests remain and reads the timestamp at which the limit resets, so there is no guessing.

    #!/bin/bash
    # Check GitHub API rate limit and sleep until reset if we're nearly out.
    # Requires curl and jq; GH_TOKEN must be set in the environment.

    # One request returns both the remaining count and the reset epoch.
    read -r remaining reset_epoch < <(
      curl -s -H "Authorization: token $GH_TOKEN" \
        https://api.github.com/rate_limit | jq -r '"\(.rate.remaining) \(.rate.reset)"'
    )

    if [[ $remaining -lt 10 ]]; then
      now=$(date +%s)
      sleep_sec=$(( reset_epoch - now + 5 ))
      # Guard against a reset time that has already passed.
      (( sleep_sec < 0 )) && sleep_sec=0
      echo "API quota low. Sleeping ${sleep_sec} seconds until reset."
      sleep "$sleep_sec"
    fi

I always do this right before I use a gh command or make a curl call straight to the API. It checks what your current count is, and if you are under a certain threshold, in this case, 10, it calculates exactly how long before the limit resets using epoch time. Adding 5 seconds to the calculation is a good idea to ensure you do not end up waking up a second too early and getting another 403.

Developing gh cli rate limit retry logic for Resilience

With the gh CLI you talk to the API directly, so you can wrap it in your own retry function that respects the reset time reported with the rate limit error (the same moment the X-RateLimit-Reset header refers to). I prefer a small shell function over passing gh flags by hand because it gives me complete control over the backoff.

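    gh_with_retry() {
      local exit_code=0
      local output reset_time target_epoch now_epoch wait_time

      output=$(gh "$@" 2>&1) || exit_code=$?

      if [[ $exit_code -ne 0 && "$output" == *"API rate limit exceeded"* ]]; then
        # Pull the timestamp out of "Reset: 2026-05-03T14:23:00Z." without the trailing period.
        reset_time=$(sed -n 's/.*Reset: \([0-9TZ:-]*\).*/\1/p' <<<"$output" | head -n 1)
        # date -d is GNU date; macOS runners need gdate or another parser.
        target_epoch=$(date -d "$reset_time" +%s)
        now_epoch=$(date +%s)
        wait_time=$(( target_epoch - now_epoch + 5 ))
        # Never pass a negative duration to sleep.
        (( wait_time < 0 )) && wait_time=0
        echo "Rate limited. Sleeping $wait_time seconds..." >&2
        sleep "$wait_time"
        gh "$@"   # retry once; its exit status becomes the function's
      else
        echo "$output"
        return $exit_code
      fi
    }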

The function inspects the error message returned from the API, extracts the Reset value from it, blocks until the reset time has passed, and then retries the command once. I have deployed this function as part of several nightly batch processing jobs that use many different repositories, and while it is not fail-safe against clock drift, it has been rock solid for CI work. The gh environment documentation provides a thorough explanation of how to pass tokens via environment variables in order to avoid credential leaks.

Managing the github token rate limit ci/cd Pipelines

How you manage tokens across your repositories affects how easy or difficult it is to run parallel tests at high volume.

Switching to Fine-Grained Personal Access Tokens

While the default GITHUB_TOKEN is a good starting point, it only offers 1,000 requests per hour per repository. If your process makes many REST calls across a large matrix, it will not take long to hit that limit. Switching to a fine-grained personal access token raises the limit to 5,000 requests per hour because it is tied to your user account instead of the GitHub App installation. You can also restrict the token to exactly what your workflow requires.

Here are the steps for creating a fine-grained token:

1. In your settings, navigate to Developer settings.

2. Click Personal access tokens.

3. Click Fine-grained tokens.

4. Click Generate new token.

5. Select the target repository.

6. Under Repository permissions, grant only Contents: Read and Metadata: Read.

The token inherits your user account’s rate limit, not the GitHub App’s.

Add the token to your repository’s secrets and expose it to the workflow:

    env:
      GH_TOKEN: ${{ secrets.MY_FINE_GRAINED_PAT }}

This means that now your matrix jobs no longer compete for the same 1,000-request budget and will not be throttled by each other. Don’t forget to set up a rotation schedule for your tokens since they only last as long as you specify.
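After swapping tokens it is worth confirming which budget the job is actually drawing from. A minimal sketch, assuming gh is installed and picks up GH_TOKEN from the environment; the function name and the 5000 default are my own choices for illustration.

```shell
# Report whether the token gh is using has at least the expected hourly limit.
# Returns 0 when it does, 1 when the limit is lower, 2 when the query itself fails.
check_token_limit() {
  local expected=${1:-5000} limit
  limit=$(gh api /rate_limit --jq '.rate.limit') || return 2
  if (( limit >= expected )); then
    echo "ok: limit=${limit}"
  else
    echo "warning: limit=${limit}, expected at least ${expected}"
    return 1
  fi
}

# Possible usage as an early workflow step:
#   check_token_limit 5000
```

If the step still reports 1,000, the job is most likely falling back to the default GITHUB_TOKEN rather than the PAT.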

Auditing Third-Party Actions for API Inefficiency

Many public actions make unnecessary API calls. For example, I once traced a mysterious 403 error back to an action that retrieved the repository’s language list from the API on every run of my build. To fix it, I replaced the action with a direct gh api call that runs only when a particular condition is met, and I cached the result so the request is not repeated.

Whenever I have doubts about an action’s behaviour, I check its source and look for calls to github.api or curl that are not cached. If a workflow uses an action merely to download a static binary, I usually replace it with a raw wget request plus a caching mechanism (e.g. actions/cache) so the binary is kept after the first download. Done once, this audit keeps that class of problem from coming back.
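A quick way to start such an audit is to list which external actions your workflows reference at all. This is a rough sketch: it greps for uses: lines, skips local actions and the actions/ and github/ namespaces, and counts the rest. The function name is hypothetical, and it does not inspect what each action does; it only tells you where to look.

```shell
# List actions referenced with `uses:` under a workflow directory, with a usage count,
# skipping local (./) actions and the actions/ and github/ namespaces.
list_third_party_actions() {
  local dir=${1:-.github/workflows}
  grep -rhoE 'uses:[[:space:]]*[^[:space:]#]+' "$dir" \
    | sed -E 's/^uses:[[:space:]]*//' \
    | grep -vE '^(\./|actions/|github/)' \
    | sort | uniq -c | sort -rn
}

# Possible usage from the repository root:
#   list_third_party_actions .github/workflows
```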

Frequently Asked Questions

Here are some common questions that sysadmins have about scaling and authenticating matrix builds.

Does authenticating via a GitHub App increase the API rate limit compared to the default GITHUB_TOKEN?

It can, depending on the limit for that App’s installation. Each GitHub App installation has its own API request limit, typically 5,000 requests per hour, shared across all repositories the installation covers. If you are the owner of the App, you can increase that limit by upgrading to a higher tier plan. For most teams, a fine-grained Personal Access Token (PAT) from a service account makes more sense and avoids sharing the rate limit across multiple repositories.

How can I monitor my organization’s remaining GitHub API quota in real‑time?

I typically use a curl command to query the /rate_limit endpoint and I push the results into a dashboard. Additionally, GitHub has an audit log streaming feature that can be used to monitor for rate-limit events. A good way to check quickly is to execute the command gh api /rate_limit --jq '.rate' as part of your script after a significant workflow run.
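For the dashboard feed, a one-line summary is usually enough. The helper below is a sketch with a hypothetical name; it only formats numbers, and the commented wiring shows one way to obtain them with gh.

```shell
# Turn limit/remaining numbers into a one-line status for a log or dashboard feed.
quota_status() {
  local limit=$1 remaining=$2
  local used=$(( limit - remaining ))
  local pct=$(( limit > 0 ? used * 100 / limit : 0 ))   # integer percentage of quota used
  echo "used=${used}/${limit} (${pct}%) remaining=${remaining}"
}

# Possible wiring at the end of a workflow run:
#   read -r limit remaining < <(gh api /rate_limit --jq '"\(.rate.limit) \(.rate.remaining)"')
#   quota_status "$limit" "$remaining"
```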

Will upgrading to GitHub Enterprise automatically resolve matrix installation API throttling?

No, not necessarily. Upgrading to GitHub Enterprise Cloud gives you a higher base limit (15,000 requests per hour for the GITHUB_TOKEN on specific plans), but it does not remove the per-repository rate limits. You still need to aggregate calls, cache responses, and set an appropriate max-parallel value, especially if your matrix builds span many repositories (possibly hundreds). Upgrading simply gives you more headroom before you hit the per-repository limits on GITHUB_TOKEN requests.